The Context Cost: Why Context Management is the Hardest Problem in Local AI

When you build a local-first AI application, you hit a brutal hardware constraint almost immediately: the context window.

In the world of cloud APIs like GPT-4, you can blindly dump 128,000 tokens into a prompt. You can pass entire codebases, dozens of tools, and months of chat history without breaking a sweat.

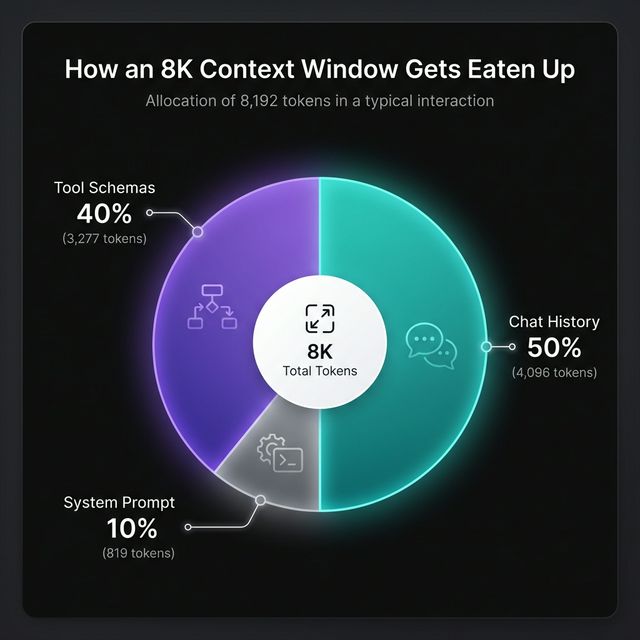

But with local models, the reality is very different. To maintain optimal inference speed and keep RAM usage in check, your context window shrinks dramatically — often to just 4K or 8K tokens. This creates a ruthless economy where every token is renting precious space in the LLM's brain.

The Tool Problem

Take tool usage. To build a capable agent, you might have 20 different tool JSON schemas. A single web-search tool schema costs roughly 250 tokens. Multiply that by 20 tools, and you've instantly burned 5,000 tokens just to teach the AI what it can do.

As a result, there's no room left for the user's chat history, the files they just dropped in, or the agent's scratchpad. The model becomes an amnesiac expert: it knows its tools, but has completely forgotten why to use them.

Ruthless Context Management

Building AICoven meant we couldn't just append features statically. We had to build an orchestration layer that dynamically loads only the relevant tools per turn, based on both the current objective and the phase of the autonomous loop (planning vs. execution vs. reflection).

This isn't just prompt engineering — it's the backbone of local AI orchestration.

Where We Are Today

In the spirit of full disclosure: we haven't solved this perfectly yet. Context window management is an ongoing, active battleground in AICoven's development.

Right now, our orchestration layer relies on deterministic phases to swap context in and out. If the agent needs to search the web:

- Load the web-search tools.

- Execute the search.

- Drop the raw HTML results from the prompt.

- Keep only a summarised memory of what was found.

- Move to the next step.

That summarised memory looks something like this:

{

"source": "https://docs.example.com/api",

"summary": "REST API uses Bearer auth, rate-limited to 100 req/min, pagination via cursor param",

"retrieved_at": "2026-02-25T07:12:00Z"

}

But friction remains:

- Sometimes the local model tries to recall a dropped detail and hallucinates, because we pruned too aggressively.

- Other times, a conversation drags on and we hit the token ceiling, forcing the app to truncate chat history just to keep working.

Semantic Pagination

Our current focus is semantic pagination. We use local, extremely fast embeddings (FastEmbed running natively via Metal) to silently query your chat history in the background. Instead of keeping your entire conversation loaded in the active context, the agent loads only the specific messages that semantically match your current query.

This creates the illusion of infinite memory within a tiny token budget.

If you're building something similar and want to share war stories, we're @aicoven — or come poke around the beta.

About the Author

I'm Andreea, the creator of AICoven. I build local-first tools for developers who care about architecture, privacy, and prompt economics.

See more of my work at papillonmakes.tech →