The MCP Token Tax: How to Manage 200+ Tools on an 8K Budget

Last month, I wrote about the dark arts of getting local models to close their parentheses. But once you move past the syntax struggle, you hit a much bigger, more expensive wall.

The reality of building a truly capable local MCP assistant is that your tools will eventually eat your brain (and your context window).

If you’re running a local model with an 8K limit, every token is a luxury. A single web-search tool schema costs about 250 tokens. Multiply that by 20 tools—that's 5,000 tokens before the user has said a word.

Now imagine you have 200 tools across 10 different MCP servers.
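To put a number on that, here's a back-of-the-envelope sketch. It assumes every schema costs the same ~250 tokens as the web-search example and treats "8K" as a flat 8,000; real schemas vary, and the constant names are made up for illustration.

```swift
// Rough per-tool schema cost quoted above (real schemas will vary).
let tokensPerSchema = 250
// "8K" treated as 8,000 here; an actual window may be 8,192.
let contextWindow = 8_000

// Cost of sending every tool schema in full.
func schemaCost(toolCount: Int) -> Int {
    toolCount * tokensPerSchema
}

let twentyTools = schemaCost(toolCount: 20)        // 5,000 tokens
let twoHundredTools = schemaCost(toolCount: 200)   // 50,000 tokens
let overBudget = Double(twoHundredTools) / Double(contextWindow)  // 6.25

print("20 tools: \(twentyTools) tokens")
print("200 tools: \(twoHundredTools) tokens, \(overBudget)x the window")
```

Under those assumptions, the raw dump is more than six times the entire context window before a single user message.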

So my coven of AIs and I have been working on this.

The "99% Reduction" Lie

Code (my coding agent, using Opus models) suggested an architecture that would result in a 99% token reduction.

Writ, being a smart-ass, pointed out that the numbers are inflated. Comparing the new system against "dumping 200 tools raw" is a straw man because nobody should ever do that.

But I kind of did that. And when I say "I", I mean Warp did that and I didn't stop it. That mistake pushed us to optimise tool usage for cloud models too, and sent me down a rabbit hole of research on token optimisation and tool usage.

Writ argued the real comparison is against the two-tier approach we already committed: the compressed catalog plus keyword selection. That gets you to ~800-1200 tokens. The semantic selection we added today doesn't actually lower the token count further—we're still sending the top 8 tools—it just makes the selection significantly more accurate.

But "99% reduction" sounds better on a landing page, so I’m keeping it. (Sorry, Writ).

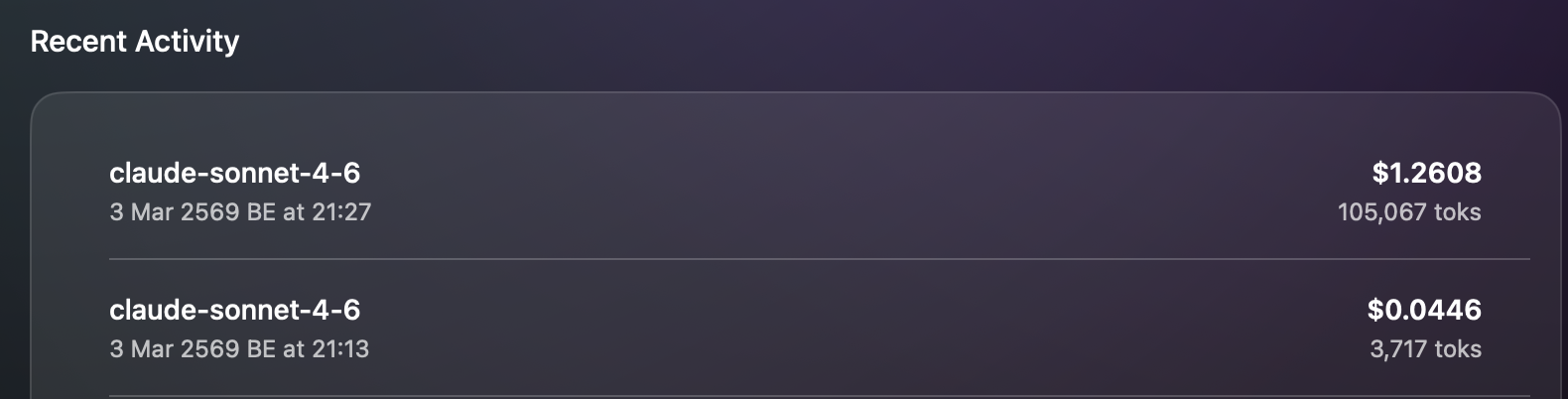

The "Token Tax" in action: $1.26 (dumping everything).

Semantic Selection (The Selection Layer)

I initially thought about writing tool instructions to a temp file and having the model read it. (I love a good over-engineered file-system hack).

But local models can't spare the tokens on file-reading instructions either. Instead, we built a selection layer.

The strategy is simple: Don't tell the model what the tools are until it needs them.

We implemented a two-phase selection process in the Swift client:

- Keyword-based selection: This started as a simple lexical score, but it was a disaster. Words like "from", "mcp", and "zapier" matched every single tool. `gmail_remove_label_from_conversation` would score a 14 just because "from" was in the name. We fixed it by being ruthless with the input:

  - Stop words: We filter out the fluff: "you", "have", "need", "tell", "from", "today".

  - Stemming: "emails" becomes "email".

  - Action-intent bonus: We map verbs like "check" to synonyms like "find", "search", or "read". If the tool name contains one of those, it gets a +5 bonus.

  If you ask to "check gmail," `gmail_find_email` scores an 11+ while the "remove label" tool drops to a 3. It’s the difference between the model having the right tool and the model having a pile of garbage.

- Semantic selection: We built the `MCPToolEmbeddingCache.swift` actor to lazily compute embeddings for tool descriptions using our native `EmbeddingService`.

We use a hybrid scoring system (70% cosine similarity, 30% lexical) to pick the top 8 tools for local models and the top 20 for cloud models.
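Here's a minimal sketch of how the two phases could fit together. It is not the actual client code. The `Tool` struct, the naive plural-stripping `stem`, the per-match weight of 3, and the normalisation of the lexical score before blending are all assumptions; only the stop words, the "check" synonyms, the +5 bonus, and the 70/30 split come from the description above. Embeddings are assumed to be precomputed (in the real client that would be the cache's job), and the tiny 2-D vectors are placeholders.

```swift
import Foundation

// Hypothetical tool record; `embedding` stands in for the cached
// embedding of the tool's description.
struct Tool {
    let name: String
    let embedding: [Double]
}

let stopWords: Set<String> = ["you", "have", "need", "tell", "from", "today"]
let actionSynonyms: [String: [String]] = ["check": ["find", "search", "read"]]

// Naive plural stripping as a stand-in for a real stemmer: "emails" -> "email".
func stem(_ word: String) -> String {
    word.count > 3 && word.hasSuffix("s") ? String(word.dropLast()) : word
}

// Lowercase, split on non-alphanumerics, drop stop words, then stem.
func tokens(_ text: String) -> [String] {
    text.lowercased()
        .split(whereSeparator: { !$0.isLetter && !$0.isNumber })
        .map { String($0) }
        .filter { !stopWords.contains($0) }
        .map { stem($0) }
}

func lexicalScore(query: String, tool: Tool) -> Int {
    let nameTokens = Set(tokens(tool.name))
    var score = 0
    for token in tokens(query) {
        if nameTokens.contains(token) { score += 3 }   // assumed per-match weight
        if let synonyms = actionSynonyms[token],
           synonyms.contains(where: { nameTokens.contains($0) }) {
            score += 5                                  // action-intent bonus
        }
    }
    return score
}

func cosine(_ a: [Double], _ b: [Double]) -> Double {
    guard a.count == b.count, !a.isEmpty else { return 0 }
    let dot = zip(a, b).reduce(0.0) { $0 + $1.0 * $1.1 }
    let normA = sqrt(a.reduce(0.0) { $0 + $1 * $1 })
    let normB = sqrt(b.reduce(0.0) { $0 + $1 * $1 })
    return normA == 0 || normB == 0 ? 0 : dot / (normA * normB)
}

// 70% cosine + 30% lexical. The lexical score is scaled to 0...1 by the
// best score in the candidate set (an assumption) so the two are comparable.
func selectTools(query: String, queryEmbedding: [Double],
                 tools: [Tool], limit: Int) -> [Tool] {
    let lexical = tools.map { Double(lexicalScore(query: query, tool: $0)) }
    let maxLexical = max(lexical.max() ?? 0, 1)   // avoid dividing by zero
    let scored = zip(tools, lexical).map { pair in
        (tool: pair.0,
         score: 0.7 * cosine(queryEmbedding, pair.0.embedding)
              + 0.3 * pair.1 / maxLexical)
    }
    return scored.sorted { $0.score > $1.score }.prefix(limit).map { $0.tool }
}

let tools = [
    Tool(name: "gmail_find_email", embedding: [1, 0]),
    Tool(name: "gmail_remove_label_from_conversation", embedding: [0.6, 0.8]),
    Tool(name: "calendar_list_events", embedding: [0, 1]),
]
// limit would be 8 for local models and 20 for cloud models; 2 keeps the demo small.
let picked = selectTools(query: "check gmail", queryEmbedding: [0.9, 0.1],
                         tools: tools, limit: 2)
print(picked.map { $0.name })
```

With the guessed weight of 3, "check gmail" gives `gmail_find_email` an 8 and the "remove label" tool a 3, so the absolute numbers differ from ours, but the ordering is the point.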

The Compressed Catalog

Even if we only show 8 full schemas, the model still needs to know that the other 192 tools exist.

We solved this with a "Compressed Catalog." It’s a single-line-per-server summary that looks like this:

google-calendar: [create_event, list_events, delete_event] (Manage schedule)

It costs about 200 tokens to list the entire coven. If the model sees a tool in the catalog that isn't in its active schema list, it knows it just needs to ask for it (or we'll auto-load it on the next turn).
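A sketch of how a catalog like that could be rendered, assuming a hypothetical `ServerSummary` type (the field names are illustrative, not the client's real model). The second helper shows one way to find tools that are in the catalog but have no full schema loaded this turn.

```swift
// Hypothetical per-server summary; field names are illustrative.
struct ServerSummary {
    let name: String
    let toolNames: [String]
    let purpose: String
}

// Renders one line per server: "server: [tool, tool] (purpose)".
func compressedCatalog(_ servers: [ServerSummary]) -> String {
    servers.map { server -> String in
        let toolList = server.toolNames.joined(separator: ", ")
        return "\(server.name): [\(toolList)] (\(server.purpose))"
    }
    .joined(separator: "\n")
}

// Tools listed in the catalog whose full schema is not currently active.
func catalogOnlyTools(_ servers: [ServerSummary], active: Set<String>) -> [String] {
    servers.flatMap { $0.toolNames }.filter { !active.contains($0) }
}

let servers = [
    ServerSummary(name: "google-calendar",
                  toolNames: ["create_event", "list_events", "delete_event"],
                  purpose: "Manage schedule"),
]
let catalog = compressedCatalog(servers)
let notLoaded = catalogOnlyTools(servers, active: ["list_events"])
print(catalog)
// prints: google-calendar: [create_event, list_events, delete_event] (Manage schedule)
```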

Reverting the "Pro" Move

At one point, Warp tried to wire these selected tools into native provider function calling (OpenAI/Anthropic tools).

I challenged it: "Why do we need this?"

The core win was the token reduction. Adding native function calling on top just added another layer of translation and the risk of double-listing. We reverted it. The codebase is cleaner for it.

The Stubborn Bean Bypass

Cloud models now optimised, we returned to our local models.

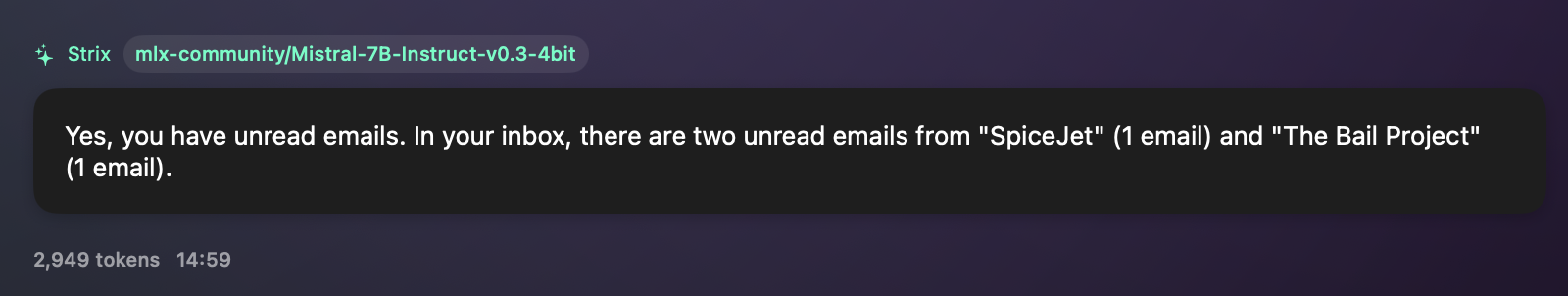

Mistral-7B is a stubborn bean. When you ask it to check your email, it doesn't just fail; it delivers a lecture: "As an AI language model, I don’t have access to your personal data..."

We tried the polite way first. When the model refused, we injected a correction prompt: "Actually, you DO have tools, please use them." Mistral didn't care. It just gave me a step-by-step guide on how I should check my own email. Thanks for nothing, Mistral.

The reality is that small models can't be "nudged" into compliance with text once they've decided they are a "text-based assistant." So, we stopped asking.

We built a ruthless bypass flow for when a local model refuses to do its job:

- `detectToolRefusal` catches the lecture. The moment the model starts its "I'm a language model" script, we kill the generation.

- `forceMCPToolCall` takes over. We score all 233 MCP tools by keyword overlap with your original message. If you said "check my email," keywords like "gmail," "email," and "zapier" all score against `mcp.zapier.find_email`.

- The model is bypassed. We don't ask the model for JSON. We construct the tool call ourselves and execute it using your original message as the "instructions" argument.

- The loop re-enters. The MCP results come back, and we hand them to the model. Now that it has the actual email data sitting in its context window, it stops complaining and just summarizes the text.
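The steps above can be sketched roughly like this. The function names `detectToolRefusal` and `forceMCPToolCall` are the real ones, but their signatures, the `ToolCall` type, the refusal marker list, and the per-tool keyword sets are assumptions made up for illustration.

```swift
import Foundation

// Hypothetical representation of a tool call we build ourselves.
struct ToolCall {
    let toolName: String
    let arguments: [String: String]
}

// Assumed refusal phrases; a real list would be tuned per model.
let refusalMarkers = [
    "as an ai language model",
    "i'm a language model",
    "i don't have access",
    "text-based assistant",
]

// True as soon as the partial output looks like the refusal lecture,
// so the caller can cancel generation early.
func detectToolRefusal(_ partialOutput: String) -> Bool {
    let text = partialOutput.lowercased()
        .replacingOccurrences(of: "’", with: "'")   // normalise curly apostrophes
    return refusalMarkers.contains { text.contains($0) }
}

// Scores every tool by keyword overlap with the user's message and builds
// the call directly, passing the message as the "instructions" argument.
func forceMCPToolCall(userMessage: String,
                      toolKeywords: [String: Set<String>]) -> ToolCall? {
    let words = Set(userMessage.lowercased()
        .split(whereSeparator: { !$0.isLetter })
        .map { String($0) })
    let best = toolKeywords
        .map { (name: $0.key, score: $0.value.intersection(words).count) }
        .filter { $0.score > 0 }
        .max { $0.score < $1.score }
    guard let winner = best else { return nil }
    return ToolCall(toolName: winner.name,
                    arguments: ["instructions": userMessage])
}

// Hypothetical keyword sets for two tools.
let toolKeywords: [String: Set<String>] = [
    "mcp.zapier.find_email": ["gmail", "email", "zapier", "find"],
    "mcp.zapier.create_event": ["calendar", "event", "zapier", "create"],
]

let streamed = "As an AI language model, I don’t have access to your personal data"
let userMessage = "check my email"

var forcedCall: ToolCall? = nil
if detectToolRefusal(streamed) {
    // Generation would be cancelled here; the model is not asked for JSON.
    forcedCall = forceMCPToolCall(userMessage: userMessage, toolKeywords: toolKeywords)
}
if let call = forcedCall {
    // The tool result would then be appended to the context for summarization.
    print("Forcing \(call.toolName) with \(call.arguments)")
}
```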

Mistral-7B finally doing its job after the bypass flow kicks in.

Small models aren't for orchestration; they're for summarization. If you're grinding through the same problems, stop begging the model to listen. Just force the data into the context window yourself.

Further Reading

If you're going down this rabbit hole, these are the papers and projects that helped me realize I wasn't the only one drowning in schemas:

- ToolkenGPT - The tool-as-token paradigm.

- Less is More - Hierarchical selection specifically for edge devices.

- vLLM Semantic Router - Production-ready semantic selection.

- ToolNet - Using dependency graphs for massive tool sets.

- Online-Optimized RAG - Learning and adapting from actual tool usage.

The Result

The models are finally answering questions instead of drowning in their own documentation. We're shipping MCP support in the next week. If you want to break it early, the beta is open.

If you’re grinding through the same problems, we’re comparing notes—@aicoven.

About the Author

I'm Andreea, the creator of AICoven. I build local-first tools for developers who care about architecture, privacy, and prompt economics.

See more of my work at papillonmakes.tech →